I have been working with AI tools for a while now. ChatGPT, Gemini, Copilot: most entrepreneurs and managers are familiar with them by now. But ten weeks ago I started with something that feels fundamentally different. Not a chat window where you type questions, but a working environment where AI sits as a structural layer beneath all your work. Claude Code.

Claude Code is not a web application. It installs on your computer and works from a code editor such as VS Code or via the Claude Desktop app. Claude gets direct access to your files, your systems and your tools, and can act autonomously, not merely respond.

And honestly, it has changed how I work so thoroughly that I can no longer avoid bringing it up in conversations with clients.

What changed in my working day

It started pragmatically. I wanted to spend less time on operational tasks. That worked. But what happened next, I had not expected.

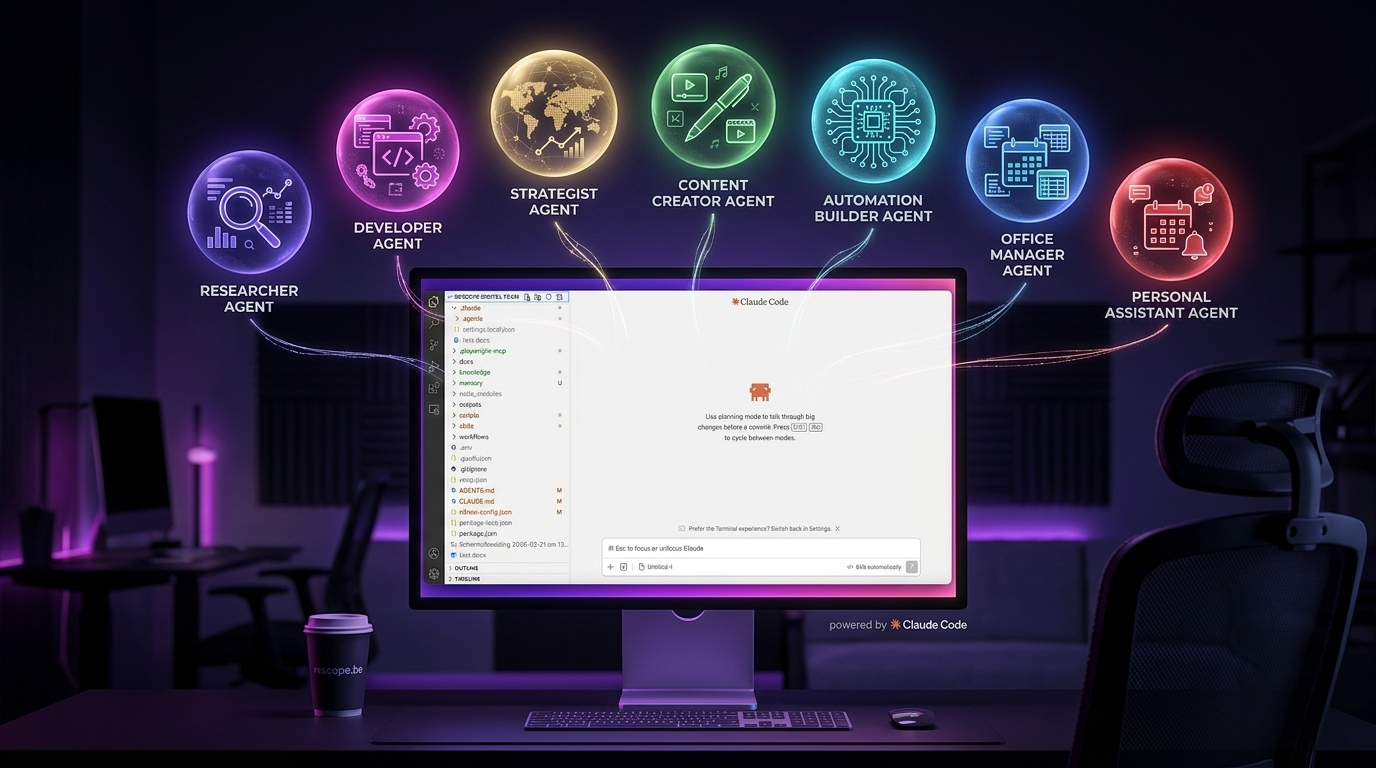

I gradually built a complete digital team. That is not a metaphor: it is literally a set of AI colleagues, each with its own role, its own knowledge and its own responsibilities:

- A researcher who follows market trends every week, spots tools and monitors AI regulation, as input for my weekly newsletter in French and Dutch.

- An automation-builder who builds, tests and connects all the associated workflows and API integrations.

- A content-creator who writes, formats, generates illustrations, builds presentations and produces videos, always in my tone of voice.

- A senior-strategist for roadmaps and competitive analysis.

- An office-manager who handles all invoice preparation, from processing timesheets to preparing the files, so I only need to check and approve.

- A developer for all programming and deployment work.

- A personal-assistant for email and calendar management.

Alongside the agents there are also skills. A skill is not an agent, but a working method that is loaded into your environment: step-by-step instructions, the required reference documents and the access needed to carry out the task. You build them for tasks you repeat regularly. Once a skill exists, you execute that task without having to explain everything again. The context is baked in.

Two examples illustrate this best:

The website. The Wix licence was due for renewal. Instead of renewing, we rebuilt the entire site from scratch, with its own codebase, deployed via Vercel, including a secured contact form and a full SEO structure. The developer agent wrote the code. I reviewed and approved it.

The Campaign Hub. Instead of a Mailchimp licence, we built our own platform, fully customised, with contact management, segmentation, automatic sign-up flows from the website and integrations with the newsletter tool and the automation layer. Every new subscriber automatically receives the right context: language, source, timestamp. It works exactly as I want it to, not as an off-the-shelf tool would allow.

That is the difference ten weeks of Claude Code makes: from user of platforms to owner of systems.

What sits underneath, and why it matters

Halfway through those ten weeks I started to understand what was happening under the bonnet. That understanding changed everything.

An AI agent is not intelligent simply because the model behind it is good. It is intelligent because the environment built around it is well constructed. The repository learn-claude-code explains this clearly across twelve progressive lessons: an agent needs a harness, the infrastructure that determines which tools it has, what knowledge it receives, how it plans and how it collaborates with other agents.

This harness consists of several layers:

Tools. An agent can do nothing without tools. What access do you give it? Read access to files? Write access? External APIs? Every tool increases its autonomy, but also increases the risk of unintended actions. You have to choose consciously.
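
To make that choice concrete, here is a minimal sketch of a tool allow-list. It is not how Claude Code implements permissions; the `ToolRegistry` class and the `read_file` and `write_file` tool names are hypothetical and exist only to illustrate the idea that every grant is an explicit decision.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set


@dataclass
class ToolRegistry:
    """Hypothetical allow-list: an agent may only call tools it was granted."""
    tools: Dict[str, Callable[..., str]] = field(default_factory=dict)
    granted: Set[str] = field(default_factory=set)

    def register(self, name: str, func: Callable[..., str]) -> None:
        # Registering a tool makes it available, but not yet usable.
        self.tools[name] = func

    def grant(self, name: str) -> None:
        if name not in self.tools:
            raise KeyError(f"unknown tool: {name}")
        self.granted.add(name)

    def call(self, name: str, *args: str) -> str:
        # Every call is checked against the allow-list first.
        if name not in self.granted:
            raise PermissionError(f"tool not granted: {name}")
        return self.tools[name](*args)


registry = ToolRegistry()
# Stand-in tools that only return a description; they touch no real files.
registry.register("read_file", lambda path: f"(contents of {path})")
registry.register("write_file", lambda path, text: f"wrote {len(text)} chars to {path}")

# Grant read access only; write access stays a separate, deliberate decision.
registry.grant("read_file")

print(registry.call("read_file", "notes.md"))
try:
    registry.call("write_file", "notes.md", "hello")
except PermissionError as exc:
    print(exc)
```

The point of the design is that the default is "no": a tool that exists but was never granted cannot be used by accident.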

Knowledge and context. An agent only works well when it has the right knowledge, at the right moment, in the right form. In my case there is a complete knowledge architecture behind it, comparable to a folder structure in OneDrive: process descriptions, client profiles, brand guidelines, methodologies, daily logs. The agent loads only what is relevant. Context compression is not a luxury, it is a requirement once things become more complex.
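
Loading only what is relevant can be sketched as a selection under a budget. The example below is an illustration under stated assumptions, not my actual setup: the `Doc` structure, the tag-based matching and the character budget are all hypothetical simplifications of what a real knowledge layer does.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class Doc:
    name: str
    tags: Set[str]
    text: str


def select_context(docs: List[Doc], task_tags: Set[str], budget_chars: int) -> List[Doc]:
    """Pick docs sharing a tag with the task, most overlap first, within budget."""
    relevant = [d for d in docs if d.tags & task_tags]
    # Stable sort: documents with equal overlap keep their original order.
    relevant.sort(key=lambda d: len(d.tags & task_tags), reverse=True)
    chosen: List[Doc] = []
    used = 0
    for doc in relevant:
        # Skip anything that would push the context over the budget.
        if used + len(doc.text) <= budget_chars:
            chosen.append(doc)
            used += len(doc.text)
    return chosen


knowledge_base = [
    Doc("brand-guidelines", {"writing", "newsletter"}, "x" * 400),
    Doc("client-profiles", {"invoicing", "clients"}, "x" * 300),
    Doc("newsletter-process", {"newsletter"}, "x" * 500),
    Doc("daily-log", {"newsletter", "writing", "clients"}, "x" * 900),
]

selected = select_context(knowledge_base, {"newsletter", "writing"}, budget_chars=1000)
print([d.name for d in selected])
```

In this run the invoicing material is never loaded, and the long daily log is skipped because it would not fit in the budget alongside the brand guidelines.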

Agent or skill. Remember the distinction from the first section: a skill is a recipe with fixed steps for a predictable task. An agent runs as a separate subprocess, with its own context, its own tools and its own memory. You call an agent when a task requires its own reasoning, parallelisation or access to external systems. That distinction has direct organisational consequences: not every task calls for the same approach.

Planning. You do not execute a complex assignment in one step. A well-built agent first makes a plan, breaks it into steps, tracks its progress and marks tasks as complete. That is exactly how a good human employee works, and exactly what most AI tools today do not do.
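
The plan-then-execute pattern fits in a few lines. This is a minimal sketch with hypothetical `Plan` and `Step` classes; a real agent would do actual work where the comment indicates.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Step:
    description: str
    done: bool = False


@dataclass
class Plan:
    goal: str
    steps: List[Step] = field(default_factory=list)

    def next_step(self) -> Optional[Step]:
        # The first step that is not yet complete, or None when finished.
        return next((s for s in self.steps if not s.done), None)

    def progress(self) -> str:
        finished = sum(1 for s in self.steps if s.done)
        return f"{finished}/{len(self.steps)}"


plan = Plan(
    goal="Publish the weekly newsletter",
    steps=[Step("collect sources"), Step("write draft"), Step("review tone of voice")],
)

while (step := plan.next_step()) is not None:
    # A real agent would carry out the step here before marking it complete.
    step.done = True
    print(plan.progress(), step.description)
```

Because progress is explicit, you can interrupt the run at any point and see exactly which steps remain.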

Orchestration. This is the hardest part, and it takes experience. When do you call a skill, when do you call an agent? When do you do something in the main conversation, when as a background process? That layer disappears easily into the background, whilst it is the core of how the whole system functions. Anyone not actively attending to it is not really managing their system at all.
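
One way to make the skill-or-agent decision visible is to write it down as a rule. The sketch below encodes only the criteria described above: an agent for tasks that need their own reasoning, parallelisation or external systems, and a skill for predictable tasks with fixed steps. The `Task` fields and the `choose_route` function are hypothetical names, and real orchestration involves far more judgement than three booleans.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    MAIN = "main conversation"
    SKILL = "skill"
    AGENT = "agent"


@dataclass
class Task:
    name: str
    has_fixed_steps: bool = False
    needs_own_reasoning: bool = False
    can_run_in_parallel: bool = False
    needs_external_systems: bool = False


def choose_route(task: Task) -> Route:
    # Agent criteria are checked first: they outweigh "predictable steps".
    if task.needs_own_reasoning or task.can_run_in_parallel or task.needs_external_systems:
        return Route.AGENT
    if task.has_fixed_steps:
        return Route.SKILL
    # Everything else stays in the main conversation.
    return Route.MAIN


tasks = [
    Task("format weekly newsletter", has_fixed_steps=True),
    Task("competitive analysis", needs_own_reasoning=True),
    Task("sync contacts via API", has_fixed_steps=True, needs_external_systems=True),
    Task("quick question about a file"),
]

for task in tasks:
    print(f"{task.name}: {choose_route(task).value}")
```

Writing the rule down, even this crudely, forces you to notice the cases where you have been choosing by habit.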

What I take away from these ten weeks

Ten weeks of working with Claude Code have fundamentally changed how I look at AI adoption with clients. I ask different questions, at a different level.

1. Redesign your processes for digital colleagues, do not simply automate them.

Most organisations ask the wrong question. They ask: "Which existing process can we automate?" But agentic AI requires a different way of thinking. You no longer think in terms of processes to speed up; you think in terms of an operational whole of digital colleagues that can in principle run around the clock. Workflows become harnesses within which those colleagues can move, with the right knowledge, the right tools and the right boundaries.

Who does what? Where is the boundary between digital autonomy and human judgement? Complaint handling looks like human work, but intake, classification, routing and first response are perfectly delegable. The conversation that follows is not. Drawing that boundary deliberately is the real design task.
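
The complaint example can be sketched as a small pipeline in which the delegable steps run automatically and the handover to a person is explicit. Everything here is an assumption for illustration: the keyword table, the team names and the `needs_human_conversation` flag are hypothetical, and a real classifier would be far less naive than keyword matching.

```python
from dataclasses import dataclass

# Hypothetical keyword and team tables; a real system would use its own taxonomy.
KEYWORDS = {"invoice": "billing", "refund": "billing", "late": "delivery", "broken": "product"}
TEAMS = {"billing": "finance", "delivery": "logistics", "product": "support"}


@dataclass
class Complaint:
    customer: str
    text: str
    category: str = "unclassified"
    team: str = "triage"
    first_response: str = ""
    needs_human_conversation: bool = True  # this part is never delegated


def classify(complaint: Complaint) -> Complaint:
    lowered = complaint.text.lower()
    for keyword, category in KEYWORDS.items():
        if keyword in lowered:
            complaint.category = category
            break
    return complaint


def route(complaint: Complaint) -> Complaint:
    # Unknown categories fall back to a human triage queue.
    complaint.team = TEAMS.get(complaint.category, "triage")
    return complaint


def respond(complaint: Complaint) -> Complaint:
    complaint.first_response = (
        f"Dear {complaint.customer}, we received your complaint and passed it "
        f"to our {complaint.team} team. A colleague will contact you personally."
    )
    return complaint


def handle(customer: str, text: str) -> Complaint:
    # Intake -> classification -> routing -> first response.
    return respond(route(classify(Complaint(customer, text))))


result = handle("Ann", "My invoice is wrong")
print(result.category, result.team, result.needs_human_conversation)
```

The boundary is in the data model itself: no step in the pipeline ever sets `needs_human_conversation` to false.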

2. How do you encode business logic in a system?

An AI agent executes what you instruct it to do. But those instructions must contain the business logic of your organisation: how do you assess a client, what is the escalation procedure, what exceptions exist, what does your brand sound like?

Today that knowledge sits in the heads of your people. Drawing it out, structuring it and translating it into something a system can use is not a technical task. It is an organisational task. It takes time, reflection and a willingness to be more explicit about how you actually work than you have ever had to be.
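
What "more explicit" means becomes clear once you try to write an escalation procedure down as data. The rules below are invented for illustration: the client tiers, the thresholds and the action names are hypothetical and would have to come from your own organisation.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Case:
    client_tier: str
    amount_eur: float
    days_open: int


@dataclass
class Rule:
    name: str
    applies: Callable[[Case], bool]
    action: str


# Hypothetical escalation rules, evaluated in order: the first match wins.
RULES: List[Rule] = [
    Rule("key account", lambda c: c.client_tier == "key", "escalate to account manager"),
    Rule("large amount", lambda c: c.amount_eur > 5000, "escalate to finance lead"),
    Rule("stale case", lambda c: c.days_open > 10, "escalate to team lead"),
]
DEFAULT_ACTION = "handle in standard queue"


def decide(case: Case) -> str:
    for rule in RULES:
        if rule.applies(case):
            return rule.action
    return DEFAULT_ACTION


print(decide(Case(client_tier="standard", amount_eur=7500.0, days_open=2)))
print(decide(Case(client_tier="standard", amount_eur=200.0, days_open=1)))
```

Even this toy version raises the organisational questions: who decided on 5000 euros, and what happens when two rules apply at once? Those answers are in people's heads, not in the code.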

3. Do you understand the orchestration, or are you only using the interface?

Most AI tools hide complexity behind an interface. Convenient for adoption, but dangerous for optimisation. If you do not know how knowledge is loaded, how tasks are planned, how agents collaborate, you cannot see where the whole is inefficient, where it makes errors, where it can accelerate.

You have to actively go looking for that layer. Agentic AI corrects itself, repairs bugs along the way and writes fixes where needed. You receive your end result and are inclined not to worry too much about how it got there. But if the whole has been working inefficiently, has not applied smart orchestration or has repeated the same step ten times, that translates directly into an enormous token bill. Understanding how your agents reason and collaborate is therefore the only way to genuinely improve the whole.
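
A first step towards seeing that inefficiency is to look at a trace of what the agent actually did. The sketch below assumes you can export a list of steps with their token usage; the trace format, the step names and the price per million tokens are all hypothetical placeholders, not real log output or real pricing.

```python
from collections import Counter
from typing import Dict, List, Tuple

Trace = List[Tuple[str, int]]  # (step name, tokens used)


def summarise(trace: Trace, price_per_million_tokens: float) -> Tuple[int, Dict[str, int], float]:
    """Return total tokens, steps that ran more than once, and estimated cost."""
    total = sum(tokens for _, tokens in trace)
    counts = Counter(name for name, _ in trace)
    repeated = {name: n for name, n in counts.items() if n > 1}
    cost = total / 1_000_000 * price_per_million_tokens
    return total, repeated, cost


# Invented trace: one step was retried ten times along the way.
trace: Trace = [("load_context", 12_000), ("run_tests", 3_000)] + [("fix_import", 4_000)] * 10

total, repeated, cost = summarise(trace, price_per_million_tokens=10.0)
print(f"total tokens: {total}")
print(f"repeated steps: {repeated}")
print(f"estimated cost: {cost:.2f}")
```

In this invented trace the retried step accounts for 40,000 of the 55,000 tokens, which is exactly the kind of pattern you never notice if you only look at the end result.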

So invest not only in access to AI tools, but also in understanding how they work. Train not only end users, but also the people who manage and steer the whole. Those are your AI Champions of tomorrow: not people who write good prompts, but people who grasp the system.