McKinsey published a conversation with Alexis Krivkovich in April 2026 about the "agentic organisation". The core message is clear: companies are experimenting widely with AI, but most are not yet turning it into structural value. Not because the technology is lacking, but because organisations have not adapted how they work, lead and make decisions.

That is true. But it is also a comfortable analysis from a distance.

In practice, the difficulty is not only knowing that processes need to change. The real challenge is getting an organisation to move when it does not yet feel enough pain to change. Revenue is still coming in. Customers are still there. Teams are busy. Managers are measured on this quarter's targets. So AI remains something for later, for IT, for a working group, or for the one enthusiastic colleague who is already exploring it.

That is the real gap. Not between people and technology, but between insight and behaviour.

Why AI often gets stuck

Most organisations now take AI seriously enough to experiment with it, but not seriously enough to adapt their operating model around it. That distinction matters.

Organising a workshop is manageable. Testing a few tools is too. Appointing an internal AI champion even creates a sense of movement. But as long as the underlying way of working remains intact, little changes in the organisation itself.

AI then becomes a layer on top of existing work. Employees use ChatGPT to write a text faster. A team builds a chatbot. IT tests an automation. HR organises training. All of that can be useful, but it is not transformation yet.

The uncomfortable question is not whether people use AI. The question is whether their work is organised differently because of it.

The mistake is treating AI as something that can be delegated

Many leadership teams are looking for someone to lead AI. An AI lead. A project manager. An innovation manager. A champion network. This is understandable and often necessary. But it also creates a dangerous illusion: that AI transformation can happen somewhere else in the organisation.

It cannot.

AI does not only change the work of employees. It also changes the work of the CEO, the CFO, the HR director, the operations manager and every leader who makes decisions. Leaders who delegate AI without going through the learning curve themselves turn it into a programme beside the organisation. Not a new way of working.

The CEO does not need to become a technical expert. But they must visibly connect AI to strategy, productivity, customer value and future competitiveness. Otherwise, it remains optional.

Leadership means three things here: legitimising urgency, allowing difficult conversations, and showing that one's own work is changing too.

Culture is not a soft side condition

Many AI plans underestimate culture. Not because culture is considered unimportant, but because it is often treated as something vague. In reality, culture is very concrete.

An organisation that has been rewarded for years for avoiding mistakes, following procedures and delivering predictably will not suddenly experiment because an AI tool is available. A manager who evaluates people on short-term output will not create room for learning unless that is explicitly expected. An employee who is uncertain about the future of their role will not automatically experiment openly with technology that can take over part of their work.

Curiosity does not emerge because it appears on a slide. It emerges when people are given time, safety, direction and examples.

That is why AI culture is not a communication campaign. It is how an organisation makes learning part of the work. Not beside the job, but inside the job. Not optional, but connected to real processes and real decisions.

The difficult truth about work that disappears

Many AI narratives remain too reassuring. They say AI takes over tasks so people can do more valuable work. Sometimes that is true. But not always.

Some tasks will disappear. Some roles will become smaller. Some processes will be executed with fewer people. And new roles will not automatically emerge in the same numbers as work disappears.

That is not a reason to create panic. It is a reason to communicate maturely.

People can sense it anyway. They see AI writing texts, analysing documents, preparing customer emails, comparing data, building schedules and performing quality checks. If leaders then only say that everyone will do "more strategic work", it sounds unconvincing.

The responsibility of leadership is not to reassure people with half-truths. The responsibility is to provide direction in time. Which tasks are likely to disappear? Which skills become more important? Which roles change first? Where is reskilling possible? Where will difficult choices be needed?

Honesty is not a threat to trust. Vagueness is.

From process optimisation to work redistribution

McKinsey is right to state that the greatest value comes from reimagining workflows end to end. But that too sounds easier than it is.

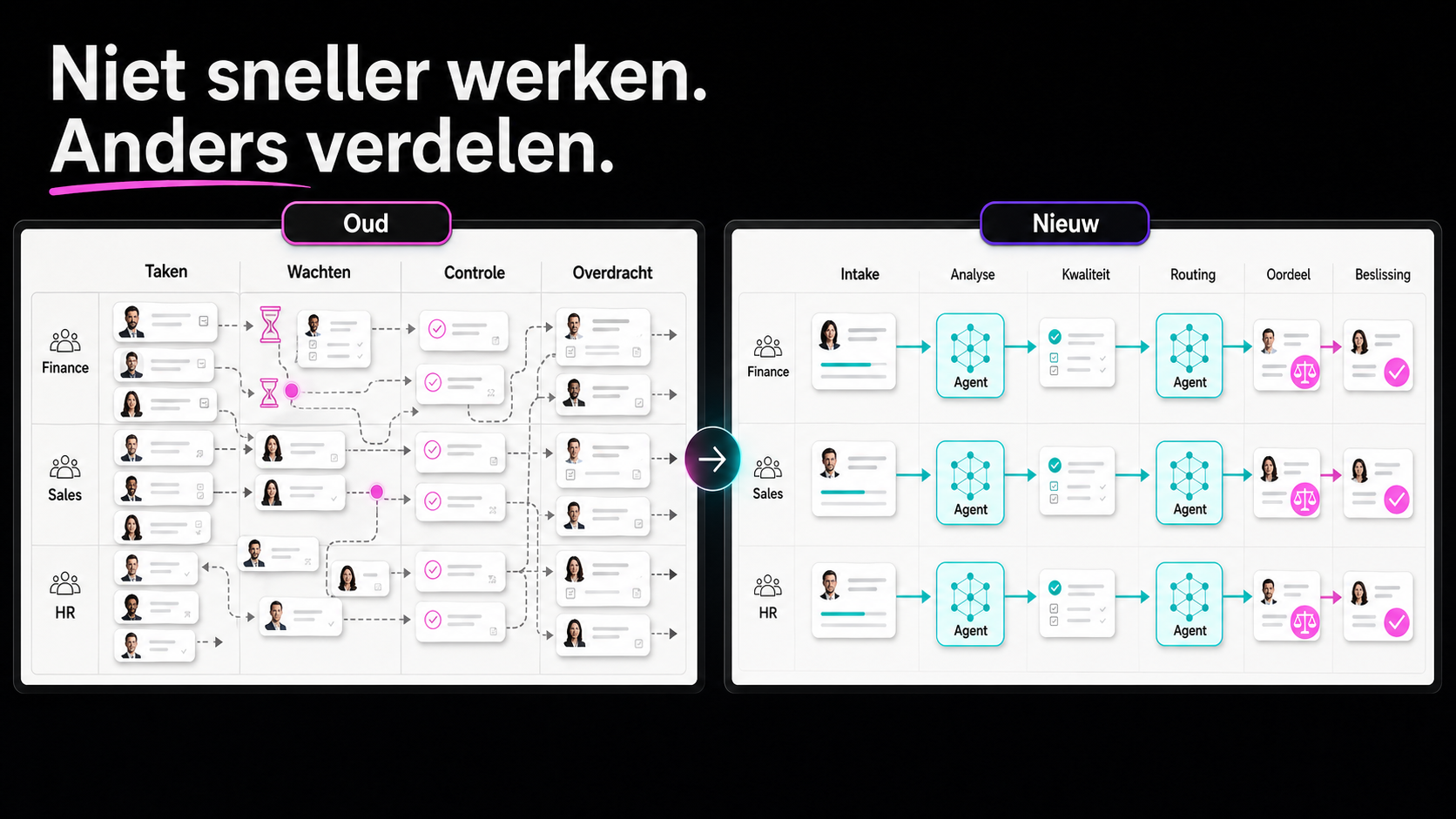

Reimagining a process does not mean taking existing steps and adding AI next to them. It means deciding again how work is distributed between people, agents and systems.

What disappears completely? What is prepared by AI? What is checked by a human? Where must a human remain inside the process? Where can a human sit above the process and mainly exercise judgement? Which decisions should never be automated? Which agent capabilities can be reused in other processes?

These are not only technical questions. They are organisational questions. They touch responsibilities, role content, reporting, risk management, training and leadership.

That is why AI fails when it is treated only as a tool project. The technology can perform a task, but the organisation must decide what that means for work, power, quality and trust.

How to truly shift an organisation

The step forward is not another inspiration session. Most leaders already know AI matters. What is missing is a way to convert abstract insight into behaviour.

A practical approach starts at the top, but it does not stop there.

- Start with the leadership team itself. Let each leader examine their own work. How are decisions prepared? Which reports are read? Which meetings consume time without value? Where can AI improve the quality of thinking, not only the speed of execution? A leadership team that does not work differently itself cannot credibly ask the rest of the organisation to do so.

- Choose one process with real tension. Not the easiest experiment, but a process where time is lost, frustration exists, customer value is affected or multiple departments are waiting on each other. A lighthouse case should show what different work looks like, not only that AI works technically.

- Make the current work painfully visible. Map lead time, waiting time, duplicate work, handovers, manual checks and errors. Not to blame people, but to make the system visible. Without visibility into friction, change remains an opinion.

- Design the new work honestly. Divide the process into what disappears, what is taken over by AI or agents, and where human judgement becomes more important. Also name what this means for roles. Process redesign without a conversation about roles is incomplete.

- Make learning part of execution. Train people not separately from the work, but while they are rebuilding real processes. Let employees, leaders and experts discover together what works, what creates risk and what needs to be redesigned. AI capability grows faster when it is tied to real responsibility.

- Build governance without suffocating movement. Clear rules are needed around data, privacy, quality and human oversight. But governance must not become an excuse to do nothing. The right question is not how to avoid every risk, but how to learn to move responsibly.
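Making the current work visible, as described in the steps above, can begin with very simple arithmetic. The sketch below is only an illustration: the `Step` fields, the `friction_summary` function and the example figures are all invented for this purpose, and it covers only lead time, waiting time, handovers and manual checks, not duplicate work or errors.

```python
from dataclasses import dataclass


@dataclass
class Step:
    """One step in a process, as recorded in a mapping session (hypothetical fields)."""
    name: str
    work_hours: float   # time someone actively works on the item
    wait_hours: float   # time the item sits idle before this step starts
    owner: str          # team or department that performs the step
    manual_check: bool = False


def friction_summary(steps):
    """Summarise where time goes in a process, based on the mapped steps."""
    work = sum(s.work_hours for s in steps)
    wait = sum(s.wait_hours for s in steps)
    lead = work + wait
    # A handover is counted whenever two consecutive steps have different owners.
    handovers = sum(1 for a, b in zip(steps, steps[1:]) if a.owner != b.owner)
    return {
        "lead_time_hours": lead,
        "waiting_time_hours": wait,
        "waiting_share": wait / lead if lead else 0.0,
        "handovers": handovers,
        "manual_checks": sum(1 for s in steps if s.manual_check),
    }


# Invented example data, not taken from a real organisation.
steps = [
    Step("Receive request", 0.5, 0.0, "Sales"),
    Step("Check completeness", 1.0, 16.0, "Back office", manual_check=True),
    Step("Approve", 0.5, 24.0, "Finance", manual_check=True),
    Step("Confirm to customer", 1.0, 7.0, "Sales"),
]

summary = friction_summary(steps)
for key, value in summary.items():
    print(f"{key}: {value}")
```

In this invented case the item is actively worked on for 3 of the 50 hours it spends in the process, across three handovers. A number like that tends to move the conversation from opinion to redesign.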

The real lesson

The agentic organisation is not a future org chart. Nor is it a technology project. It is a shift in how an organisation thinks, works, learns and decides.

McKinsey rightly states that workflows, leadership and culture must change. But the reality in many organisations is more stubborn. They are not against change. They simply do not yet feel enough urgency, have little innovation muscle, and are pulled back every day into the familiar way of working.

That is why AI transformation does not start with the question of which tool you will use. Nor does it start with who will coordinate AI. It starts with a more uncomfortable question: which parts of our work are we truly willing to redistribute, and who is willing to change personally as part of that?

AI becomes strategic only when an organisation stops experimenting at the edges and starts shifting at the core.

Where in your organisation is AI still being treated as a project beside the work, while it should actually be changing the work itself?